Read Part 1 First

If you haven’t checked out Part 1 of this article, I encourage you to do so, as it discusses two of the most important design considerations when developing experiences in virtual reality: how to avoid creating experiences that induce motion sickness, and the importance of on-boarding. That being said, let us dive into some additional design considerations, including tool placement, binaural audio, and the virtual body ownership illusion.

Tool Placement

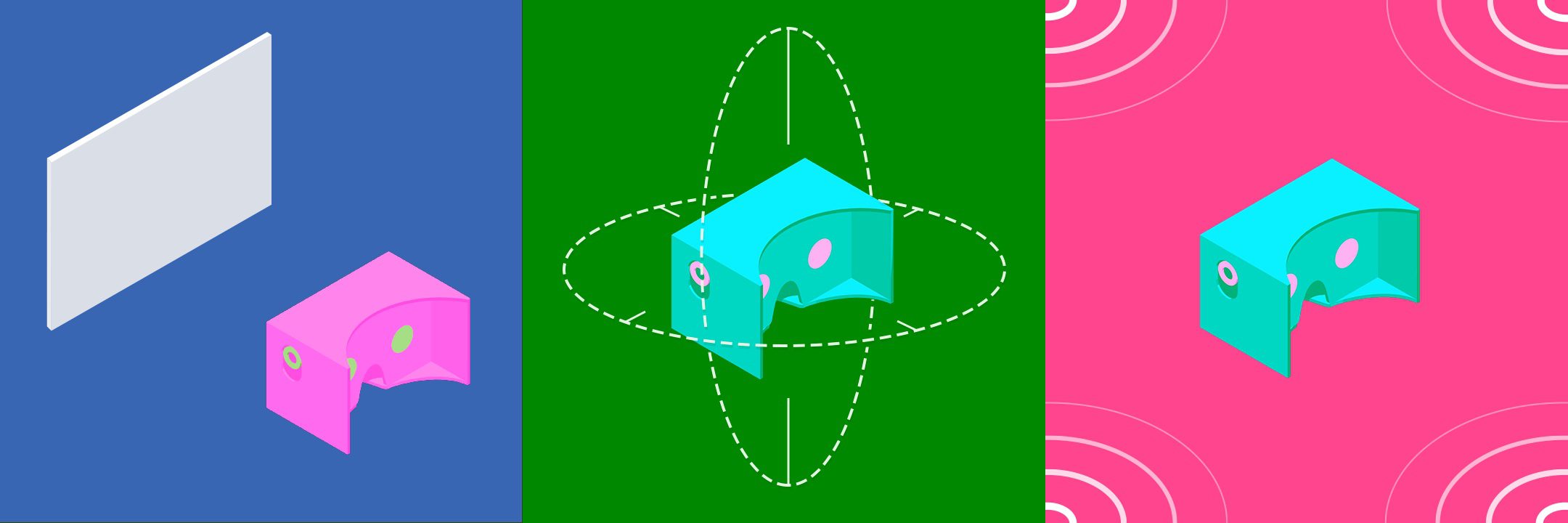

This is one of the most important and interesting design patterns that make designing for virtual reality different from designing for non-VR, 2D games. In traditional 2D games, user interfaces are typically a layer between the user (you) and the game world. In these 2D experiences, it would be frustrating if the user constantly had to look down to grab guns, or move the camera every which way to perform other actions. However, when you are the character in a virtual reality experience, it is much more natural to pull tools from behind your back or look down at your toolbelt to grab your next weapon or device than to have a display floating in your view, obstructing objects and possibly inducing motion sickness (see motion sickness in the previous article). Virtual reality experiences are about immersion, and thus the better you can simulate reality, the more likely you are to keep users immersed in your experience. In reality, you would be much more likely to pull an arrow from your back than to select it from some floating menu.

Good VR UI should make you feel powerful: it gives you the information you need to make decisions, and those decisions are enacted in the game world in a way that feels natural. One design philosophy that supports this “natural feel” is known as the diegetic interface philosophy. In this philosophy, interface elements exist inside the game world, so they appear both for the characters in the fiction and for the audience. One example of a non-VR game that follows this, to elicit a more realistic feel, is Dead Space. You can read more about their philosophy here. Contrast this with the interface in Mass Effect and you’ll clearly see the difference in approaches.

Some good virtual reality experiences that use the body as the place to keep tools and utilities are Space Pirate Trainer, Tilt Brush, and Holopoint. In Tilt Brush, the tools and canvases are always in your hands. In Holopoint, you must draw arrows from a quiver behind you like you would in real life, by reaching over your shoulder and grasping an arrow (pulling the trigger in the game). Hover Junkers is another VR game that introduces some of these patterns, but in a confusing way: each gun has its own reloading interaction, and keeping track of them all quickly gets confusing. Remember, even if you create an immersive experience, the goal is still to create a FUN and ENGAGING experience. Too much cognitive load and confusing user interactions can get overwhelming quickly and suck all the fun out of it.
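To make the quiver idea concrete, here is a minimal sketch of a body-anchored tool slot. It is not how Holopoint or any other title actually implements it; the names `Vec3`, `body_anchor`, `can_grab`, and `QUIVER_OFFSET`, the offsets, and the grab radius are all hypothetical. It assumes a y-up coordinate system where a yaw of 0 faces +z and positive yaw turns toward +x, and it uses only the head's yaw so the quiver does not swing around when the user looks up or down.

```python
import math
from dataclasses import dataclass


@dataclass
class Vec3:
    x: float
    y: float
    z: float


def body_anchor(head: Vec3, yaw: float, right: float, up: float, forward: float) -> Vec3:
    """World position of a point offset from the head in yaw-only body space.

    Assumed convention: y is up, yaw 0 faces +z, positive yaw turns toward +x.
    Offsets are in meters; a negative forward value means "behind the user".
    """
    fwd_x, fwd_z = math.sin(yaw), math.cos(yaw)
    right_x, right_z = math.cos(yaw), -math.sin(yaw)
    return Vec3(
        head.x + right * right_x + forward * fwd_x,
        head.y + up,
        head.z + right * right_z + forward * fwd_z,
    )


def can_grab(hand: Vec3, anchor: Vec3, trigger_pulled: bool, radius: float = 0.2) -> bool:
    """True when the trigger is pulled while the hand is inside the grab zone."""
    dist = math.dist((hand.x, hand.y, hand.z), (anchor.x, anchor.y, anchor.z))
    return trigger_pulled and dist <= radius


# Hypothetical quiver slot: slightly right of, below, and behind the head.
QUIVER_OFFSET = dict(right=0.15, up=-0.10, forward=-0.20)

head = Vec3(0.0, 1.7, 0.0)
quiver = body_anchor(head, yaw=0.0, **QUIVER_OFFSET)

# Hand reaching over the shoulder: inside the grab zone.
print(can_grab(Vec3(0.15, 1.6, -0.25), quiver, trigger_pulled=True))  # True
# Hand held out in front of the body: outside the grab zone.
print(can_grab(Vec3(0.0, 1.2, 0.4), quiver, trigger_pulled=True))     # False
```

Keeping the slot in one predictable place relative to the body is what lets users reach for it without looking, which also keeps the cognitive load low.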

One of the major differences between creating experiences in VR and in traditional software has to do with the use of user interfaces. Since the arrival of the first iPhone, we’ve become very good at telling stories through the medium of a window into a world. That world can be amazingly well realized, but you’re still viewing it through a very narrow window, whether that’s your computer screen or your mobile device. The UI has primarily acted as a layer between you and the game world that makes your interaction with the game world work. But in VR, it’s very different. With VR you are now immersed in this world; you are a living, breathing part of it. It’s tempting to approach UI in VR in the same way as we’ve done in the past, but that doesn’t work.

Binaural Audio

Have you ever watched a scary movie with the sound off? Of course not! And if you have, you’ve probably noticed that the movie isn’t all that scary, is it? That’s because it’s difficult to fully communicate a narrative and truly get a viewer immersed in an experience that has no sound. Sound design is powerful in traditional experiences (movies, games, etc.) but can be even more powerful in virtual reality thanks to the fact that you are INSIDE the environment. By feeding each ear a slightly different signal (binaural audio), we can cover the full 360 degrees around the listener and give them an idea of where a sound is coming from. In a 2D game, a monster can still make you jump with visuals and a quick unexpected sound, but the effect is far more intense in a virtual reality experience. Thanks to the positional tracking that comes standard in head mounted displays, it is much easier to create 360 sound that is generated on the fly as a user looks around their environment, allowing you to create a new level of suspense and immersion as you progress the user through your narrative.

Real-time 3D audio and environmental modeling engines are coming out all the time (see Two Big Ears joining Facebook). Hearing sounds above, below, behind or at any point in space around you, over any pair of headphones, will create experiences in virtual reality that traditional 2D experiences have no chance of competing with. Virtual reality is not only ushering in a new era of visual storytelling but prompting the smartest minds to develop software that will take audio immersion to the next level.
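As a rough illustration of how head tracking feeds positional audio, the sketch below computes two simple per-ear cues for a sound source on the horizontal plane: the arrival-time difference between the ears (using Woodworth's spherical-head approximation) and a constant-power left/right gain. This is a simplification under stated assumptions, not what a production spatializer does; real engines typically apply measured head-related transfer functions, which also capture elevation and front/back cues. The function names, the head radius, and the coordinate convention (yaw 0 faces +z, positive yaw turns toward +x) are assumptions made for this example.

```python
import math

HEAD_RADIUS_M = 0.0875      # assumed average head radius
SPEED_OF_SOUND_M_S = 343.0  # speed of sound in air at room temperature


def relative_azimuth(listener_xz, yaw, source_xz):
    """Angle of the source relative to where the head faces, in (-pi, pi].

    Positive values mean the source is to the listener's right.
    """
    dx = source_xz[0] - listener_xz[0]
    dz = source_xz[1] - listener_xz[1]
    diff = math.atan2(dx, dz) - yaw
    return math.atan2(math.sin(diff), math.cos(diff))  # wrap into (-pi, pi]


def interaural_time_difference(azimuth):
    """Seconds by which the sound reaches the right ear before the left.

    Woodworth's approximation for a distant source and a spherical head.
    Front and back are folded together, since this cue alone cannot tell
    them apart.
    """
    lateral = math.asin(max(-1.0, min(1.0, math.sin(azimuth))))
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (lateral + math.sin(lateral))


def ear_gains(azimuth):
    """Constant-power (left, right) gains: a crude stand-in for level cues."""
    pan = math.sin(azimuth)               # -1 is full left, +1 is full right
    angle = (pan + 1.0) * math.pi / 4.0   # 0 .. pi/2
    return math.cos(angle), math.sin(angle)


listener = (0.0, 0.0)
monster = (2.0, 0.0)  # two meters directly to the listener's right

for yaw in (0.0, math.pi / 2):  # facing forward, then turned to face the monster
    az = relative_azimuth(listener, yaw, monster)
    itd_ms = interaural_time_difference(az) * 1000.0
    left, right = ear_gains(az)
    print(f"yaw={yaw:.2f} rad  itd={itd_ms:.3f} ms  left={left:.2f}  right={right:.2f}")
```

Recomputing these values every frame from the headset's tracked pose is what makes a sound appear to stay fixed in the world while the user turns their head.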

“Hearing sounds above, below, behind or at any point in space around you will create experiences in VR that traditional 2D experiences can’t compete with.”

Virtual Body Ownership Illusion

This “is the illusion of owning either a part of a body or an entire body other than one’s own…” and “it can be induced experimentally by manipulating the visual perspective of the subject and also supplying visual and sensory signals which correlate to the subject’s body. For it to occur, bottom-up perceptual mechanisms, such as the input of visual information, must override top-down knowledge that the certain body (or part) does not belong. This is what results in an illusion of transfer of body ownership. It is typically induced using virtual reality.” (Wikipedia) Simply said, all of the senses of the body contribute to this virtual body ownership illusion.

“I am predicting a new era of avatarism enabled by virtual reality.”

This illusion has the potential to be one of the coolest things in virtual reality. I am predicting a new era of avatarism enabled by virtual reality. With the unique experiences that virtual reality offers, users will have a new affinity for their characters in games. Why? Because in virtual reality they don’t just watch their characters, THEY ARE THE CHARACTER. Imagine harnessing this power to create health and fitness applications. Do you want a stronger avatar? Then you need to put in the time and build your own body as you build your avatar.

Keeping Up

As designers, we can’t necessarily control the speed at which new input devices and hardware hit the market, but we can be cognizant of how they all fit into the bigger picture and how we can use them to create richer experiences. Challenge yourself to think of your five senses and how you might enhance each of them with what virtual reality enables. With sight, the head mounted display already gives you a 3D environment to manipulate but, as mentioned earlier, that doesn’t mean we can simply port 2D experiences over.

Give users control of their navigation, use rest frames when necessary, match the fidelity of your experience to the systems that can handle it, design UI that avoids creating the binocular-occlusion conflict, and study the other considerations that can affect the user. With the sense of hearing, look into using 360 audio plugins that work with Unity and the Unreal Engine to create realistic audio in your environment. With touch, there are new devices coming out all the time that tout haptic response and feedback, but none have widespread adoption.

Set up Google alerts and follow the conversation around haptic hardware for VR so you are ahead of the curve and can use these devices to create new experiences and strengthen the virtual body ownership illusion. Taste and smell are still a ways off (I think) but again, make sure you set up alerts that keep you ahead of the curve. The closer you can get to taking advantage of the hardware to effectively target these senses, the closer you get to creating a true virtual body ownership illusion and a truly immersive virtual reality experience.

Putting It All Together

By just combining a few of these principles, you will be well on your way to creating the next virtual reality experience that people rave about. But when we create the next generation of experiences, we have a choice. Are we going to create the time-sucking experiences of today, that leave people more entertained but also more overweight and unhealthy, or are we going to create experiences that truly improve lives? We shouldn’t be striving to create a virtual reality dystopia where people plug in and forget the real world. We should be creating experiences that improve education, treat disorders, and improve the overall health and wellness of people to enjoy in the REAL WORLD.

Having led the development of software products at Bodybuilding.com for 7+ years, I have been striving to create experiences that will improve the overall health and fitness of users all over the world. We were successful using traditional software like workout tracking apps. We also created BodySpace, the largest health and fitness social community. Even given these tools, it was still difficult to get people to follow through with their commitments and stick to their goals. Leaving Bodybuilding.com to found Black Box was a difficult decision but, with virtual reality, I believe we can create next generation experiences that combine the principles in this article, room-scale environments, and innovative resistance systems to truly help reduce the obesity epidemic and change the course of humanity for the better. I hope you’ll join me!
